Design Principles

Collection: AI Platform

This is the first entry in a collection about building a custom AI harness and orchestration layer for autonomous project work.

You know the motion:

- Doing some work → Need some type of help

- Open a chat → Type a prompt → [wait] → Read the response (repeat)

- Copy/Paste response back into ‘working version’ → Continue work

Many people use “AI” like this today. It’s easy, and it works: you get answers and save time.

Changes are also happening at a rate we’ve never seen before. Models are getting better, responses are getting more robust. Companies are rapidly launching new products; others are integrating with AI tools. It’s showing up everywhere.

This is arguably one of THE largest technological transformations in our lifetime.

tldr

Good news: as these capabilities improve, AI becomes more accessible and easier to use for more and more people

Bad news: …this includes your competition!

I see a problem looming on the horizon, and pose this question:

“Is ‘chatting with AI’ the way you plan to scale your business and compete?”

Our inverted relationship with AI

For the last three years, I’ve struggled with this inverted relationship with AI.

When working with clients new to AI, there is often this moment during design when they realize AI is not this ✨magical✨ thing that gets turned on and just starts doing their work.

tldr

“It’s not what I had imagined it would be like…”

“Today, I’m mostly asking AI questions and getting replies. It’s amazing how good it is.”

“I need to start automating tasks so it can do some of these things on its own.”

I, like many of my clients, have big expectations for what AI can do for me.

But many non-technical, AI-curious users are somewhat stuck at the moment. They have access to this incredibly powerful tool, but…

AI only does things when they ask it.

I’ve spent a lot of time thinking about and building solutions around this limitation.

“How do I turn the tables and begin realizing automated benefits from AI?”

tldr

Today’s models are powerful enough to be doing the work themselves.

Agents should be asking me to assist them …not the other way around.

I started putting together a wish list of things I want in an AI platform:

- a system that is NOT dependent on me to initiate every task

- “agents” that can do real work, not just answer questions

- …that I trust to make decisions on my behalf

- feels like having a real team working with me…

- …but NOT an inexperienced team I constantly need to babysit

- enables me to work on other things …

- allows me control and observability

tldr

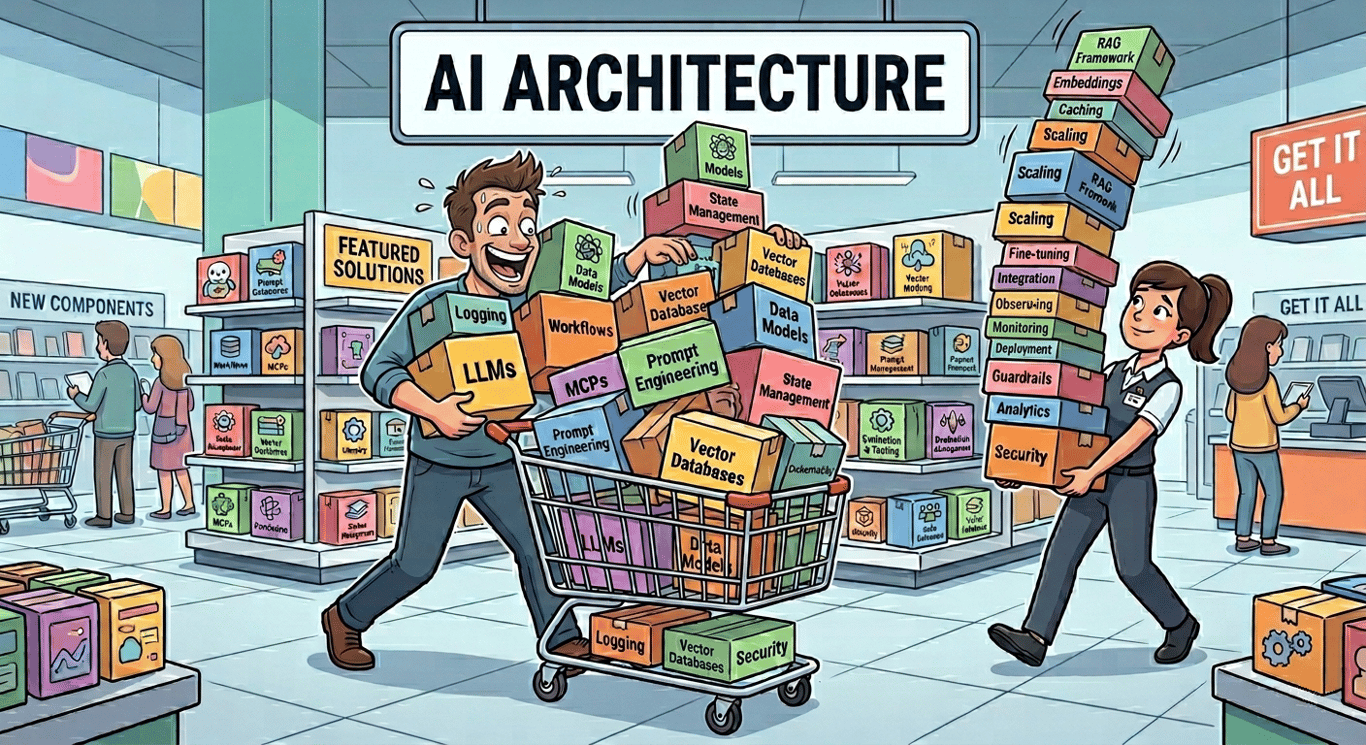

It’s difficult to find a single product or tool that delivers on all of these requirements in an integrated, flexible, safe manner. There is a flood of products hitting the market, but (for me) it’s too early to commit to any one of them. And… I don’t want to wait.

So, I built my own platform that does exactly what I want.

My Journey

This series shares my goals, struggles, and decisions. It’s unavoidably opinionated.

Your goals and experiences will be different.

I plan to cover why I built a platform, decisions and designs that evolved, and principles that I believe everyone should be considering.

Follow-up entries will go deeper on the orchestration pipeline, security, tooling, etc., and compare it to other tools like Pi Coding Agent [foreshadow] and Anthropic’s new Managed Agents, which looks pretty cool.

It’s actually quite difficult to write about design decisions without explaining the reasoning behind them. A lot of thought went into balancing depth and length of this series. I hope you find value in it.

If you have any questions - just ask!

The chat ceiling

Like many, I started with tools like ChatGPT.

It was impressive what I could ask and what it created. I started incorporating it into my personal and professional life in every way possible.

Unfortunately, the productivity gains hit a ceiling. I was constantly typing similar commands then copying/pasting content somewhere else to work with it.

I began looking for ways to speed up this process.

I quickly graduated to third-party apps like Msty, BoltAI, Open WebUI, LibreChat, etc. These tools allowed organization of reusable prompts and had placeholders to make them dynamic.

These were all great tools, each one doing a similar-ish thing. I quickly acquired a warehouse of reusable prompts, saved chats, and even different models for different needs, including using local/free open source models. All cool stuff…

But I was tethered to the screen, and I certainly wasn’t typing any less.

Context is Everything

By this point, people were figuring out that the up-front context you gave an LLM (in addition to the request) had a direct impact on the quality and accuracy of the response you got out.

Organizations have instituted “better prompting” training. I’ve even seen organizations hiring “Prompt Advisors” to coach employees.

The problem with all of that… the real context usually lives outside the platform: either in your head or in another system / documents.

Attaching documents to every chat was cumbersome. Re-writing your department’s SOP into every prompt is crazy.

We’ve also learned that loading too much information can have a reverse effect on output quality.

I wrote about this, AI’s “dumb zone”, and why context is so important to get right in Context Is Everything.

All of the tools I was using shared the same flaws as before:

- they were still just chat

- no context of who I was, what I was working on, etc. unless I told it every time

- difficult to control just the right info at the right time

- responses needed to be copy/pasted somewhere to be actually useful.

Nothing against chat

It’s worth a pause here to clarify…

Chat is the current standard for engaging with AI, and I’m not suggesting it is the problem. For general questions and requests, chat works great. But for bigger and ongoing tasks, a better, more scalable interface is needed.

The biggest flaw chat has: it doesn’t scale. Chat requires YOU to write the prompt, YOU to read the responses, YOU to incorporate and YOU to review the work. (ALWAYS review the work!)

When you want AI to do more for you… YOU are the bottleneck!

Takeaway

There’s nothing wrong with chat.

Just remember, the moment you stop engaging… AI stops working too.

We want something better…

Bringing AI to the content

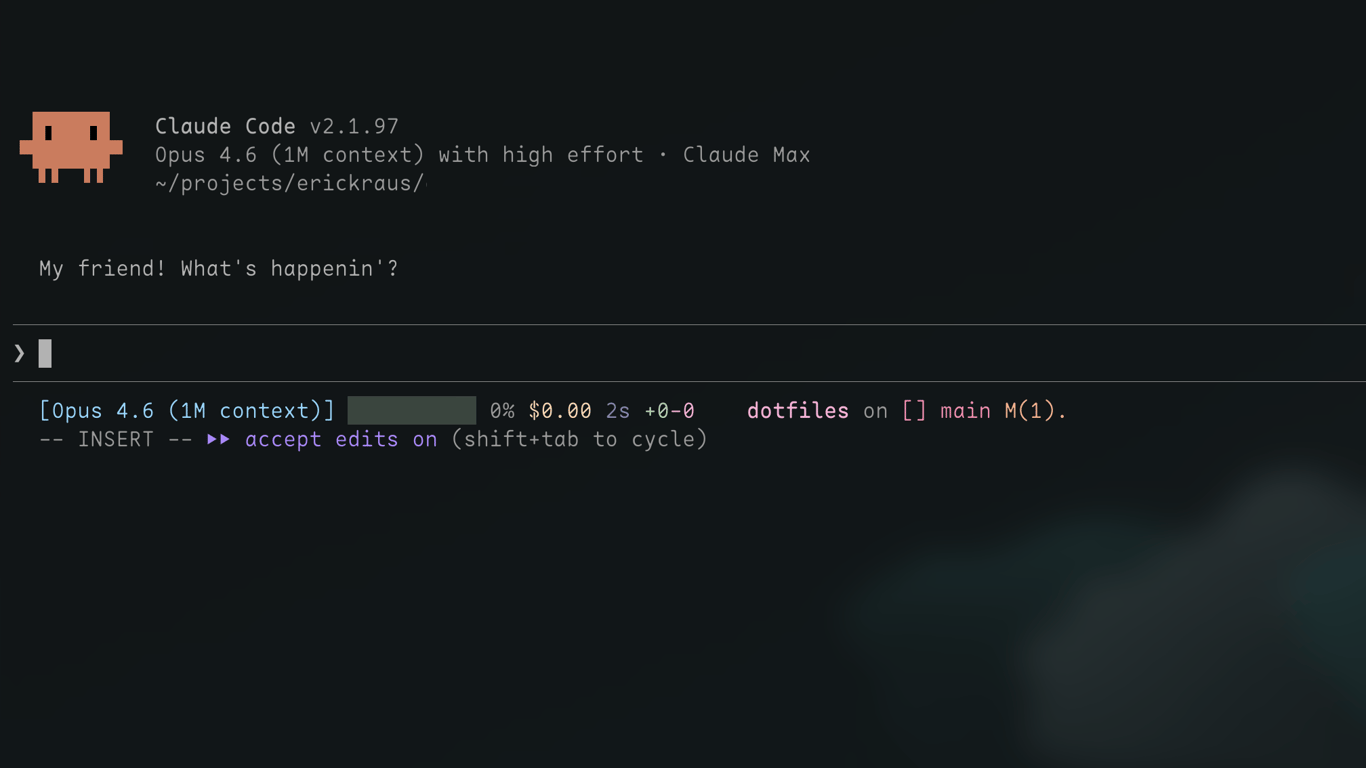

Claude Code was released around February 2025. It changed the game. It allowed me to do two things I couldn’t before:

- Context: have AI work directly with content, reading and writing files

- Tools: write and run code to do things in a predictable way and connect to other systems

Adding context to a chat was now natural: Before you begin, read @path/to/file.

Also, a CLAUDE.md file could be created in any folder. When Claude works with files in that folder, it automatically reads that file, getting additional context about the directory, processes, standards to follow, etc. without me adding it to each request.
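The folder-scoped idea can be sketched in a few lines. This is a simplified illustration, not how Claude Code actually loads CLAUDE.md: the hypothetical `collect_folder_context` gathers every CLAUDE.md from a root folder down to the folder being worked on, general rules first, so the result could be prepended to a request.

```python
from pathlib import Path


def collect_folder_context(root: Path, target: Path, filename: str = "CLAUDE.md") -> str:
    """Concatenate context files found on the way from `root` down to `target`.

    Hypothetical helper that illustrates folder-scoped context; it is not
    how Claude Code loads CLAUDE.md internally.
    """
    root, target = root.resolve(), target.resolve()
    # Build the chain of folders: root, root/a, root/a/b, ... target.
    folders = [root]
    for part in target.relative_to(root).parts:
        folders.append(folders[-1] / part)

    sections = []
    for folder in folders:
        context_file = folder / filename
        if context_file.is_file():
            sections.append(context_file.read_text(encoding="utf-8").strip())
    # Outermost (general) rules first, innermost (folder-specific) rules last.
    return "\n\n".join(sections)
```

The ordering is the design choice worth noticing: broad standards come first and the most specific folder gets the last word.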

Tools unlocked predictable outcomes and data movement.

“I” built a local toolbox of Python scripts and third-party integrations that Claude Code could use to write Google Docs, update Salesforce, etc. Claude Code was now reading and writing the actual content I was working with AND helping me build even more AI/tools to expand. This was a major compound effect I hadn’t experienced before.

Big win: it eliminated a lot of the copy/paste tasks!

And yet… here I was, still in chat, and in a terminal.

Five chat windows meant five times the output… and five times the babysitting.

I was still the one deciding what to work on, initiating the discussion, checking for results, etc. The productivity gain was real, but still only scaled linearly with more of my time.

The unanswered question kept nagging me:

What does it take to go from chat to real autonomy? Not just “AI-assisted,” but AI-driven.

At the time, nothing like this really existed in the market, so I set out to build something.

My first real ah-ha moment

Things unlocked when I let Claude Code loose on my Obsidian “second-brain” notes.

A simple scheduled command now initiated Claude automatically every ~30 minutes, instead of me doing it.

Chat prompts morphed into process/workflow instructions:

- discover upcoming meetings (empty notes)

- read previous meetings for context/goals

- write prep notes based on goals. e.g. What questions should I ask?

- after meeting, review then summarize notes and transcripts

- re-qualify sales opportunities and write next steps

- what should I improve on in my next meeting?
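A minimal sketch of one scheduled pass over the vault, assuming a hypothetical `Meetings/` folder with one Markdown note per meeting. `find_meetings_needing_prep`, `build_prep_prompt`, and `run_once` are illustrative names, and `run_agent` stands in for however the agent actually gets invoked. A cron entry such as `*/30 * * * *` is one way to run it every ~30 minutes.

```python
from pathlib import Path

# Hypothetical vault layout: one Markdown note per meeting under Meetings/.
MEETINGS_DIR = "Meetings"


def note_body(text: str) -> str:
    """Return the note text with any leading YAML frontmatter block removed."""
    if text.startswith("---\n"):
        end = text.find("\n---", 4)
        if end != -1:
            return text[end + 4:].strip()
    return text.strip()


def find_meetings_needing_prep(vault: Path) -> list[Path]:
    """Meeting notes that exist but have no body yet (the 'empty note' signal)."""
    return sorted(
        p for p in (vault / MEETINGS_DIR).glob("*.md")
        if not note_body(p.read_text(encoding="utf-8"))
    )


def build_prep_prompt(note: Path) -> str:
    # Workflow instructions take the place of a hand-typed chat prompt.
    return (
        f"Read previous notes related to '{note.stem}' for context and goals, "
        f"then write prep notes into {note.name}: what questions should I ask?"
    )


def run_once(vault: Path, run_agent) -> int:
    """One scheduled pass; returns how many notes were handed to the agent."""
    notes = find_meetings_needing_prep(vault)
    for note in notes:
        run_agent(build_prep_prompt(note))
    return len(notes)
```

Note that "empty body means it needs prep" is exactly the kind of implicit assumption that caused the mix-ups described below.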

This upgrade was real movement, and I have to admit there were some ‘perma-grin’ days in there.

The system was slightly fragile and hinged on watching the ‘state’ of content closely. Agents were reading/writing the same files I was working with. This is what I wanted, but the workflow had expectations built-in.

If a file didn’t look as expected, sometimes the agent made assumptions. It was like working with a teammate who did great work but whom I couldn’t communicate with in real time.

⊕ I actually had time to prepare and manually entered some notes …

⊖ Agent assumed it was placeholder and it was overwritten

⊕ I was too busy talking signature process with the client… didn’t take notes …

⊖ Agent updated deal “At risk” b/c: no notes meant ‘the client didn’t show up’.

tldr

Haha. There’s a reason why work isn’t completely automated today…

But, is that going to change soon? Probably faster than many would like.

Net-net: Was this still saving me time? Oh yeah!

It wasn’t ‘fully hands-off,’ like many people expect from AI today. But there were also secondary benefits that often get overlooked: data completeness and the quality of the responses were both crazy good; I just needed to work through consistency.

Takeaway

At the time, models just weren’t capable of handling real-life ambiguity …yet.

The agents thrived on structured data and processes.

So that’s what I needed to adapt to: ensuring we both speak the same language.
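A minimal sketch of what "speaking the same language" could look like: instead of the agent inferring state from whether a note is empty, each note declares an explicit `status` in its frontmatter. The `NoteStatus` values and the `next_action` mapping are hypothetical, chosen to cover the two mix-ups above; anything missing or unrecognized routes to a question for the human rather than a guess.

```python
from enum import Enum
from typing import Optional


class NoteStatus(Enum):
    """Hypothetical shared vocabulary: the note states what state it is in."""
    SCHEDULED = "scheduled"          # placeholder created, nothing written yet
    HUMAN_DRAFT = "human-draft"      # human wrote notes; agent must not overwrite
    PREPPED = "prepped"              # agent already wrote prep notes
    HELD_NO_NOTES = "held-no-notes"  # meeting happened, nobody took notes
    NO_SHOW = "no-show"              # human confirmed the client did not attend


def parse_status(frontmatter: dict) -> Optional[NoteStatus]:
    """Return the declared status, or None if it is missing or unknown."""
    try:
        return NoteStatus(frontmatter.get("status"))
    except ValueError:
        return None


def next_action(frontmatter: dict) -> str:
    status = parse_status(frontmatter)
    if status is None:
        # Unknown state: ask instead of guessing.
        return "ask-human"
    return {
        NoteStatus.SCHEDULED: "write-prep-notes",
        NoteStatus.HUMAN_DRAFT: "append-suggestions-only",
        NoteStatus.PREPPED: "wait",
        NoteStatus.HELD_NO_NOTES: "summarize-transcript",
        NoteStatus.NO_SHOW: "flag-deal-at-risk",
    }[status]
```

Only an explicit `no-show` can mark a deal at risk; "no notes" alone never does.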

You should be doing this...

I took a lot of learnings away from the Claude + Obsidian setup.

I HIGHLY recommend you experiment with something like this for yourself, if you haven’t already.

Tools have evolved since this experiment, too. Claude CoWork, with Scheduled skills, would be a much better starting point.

With new learnings in mind, it was now time to go bigger! Let’s give agents what they want.

If I had asked people what they wanted, they would have said faster horses.

I did this so you could all learn a lesson

(that’s a lie)

Unfortunately, what came next was a massive over-pivot on structure and control.

tldr

My experience so far was that agents thrived working within tightly controlled situations.

I also wanted to start building more new things with AI. This meant aligning with agents on why and exactly what & how things should work.

So, that’s what I set out to build: a fully deterministic orchestrator, structured workflows, schema-validated models, and Jinja2-hydrated prompt templates.

The works, so I could go hands-off.

In this system, every step would become prescribed, tuned, and managed. Every output structured and validated. This control meant I could tune and optimize every step from dynamic context injection to rubric-validated results.

Tasks were configured with files that looked like this:

```yaml
feature:
  name: "build-deal-qualifier"
  pipeline:
    - stage: plan
      template: templates/plan.j2
      validation: schemas/plan.schema.json
    - stage: implement
      template: templates/implement.j2
      validation: schemas/code-output.schema.json
    - stage: verify
      template: templates/verify.j2
      max_retries: 3
  constraints:
    allowed_paths: ["src/", "tests/"]
    blocked_commands: ["rm", "git push --force"]
```
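To make the deterministic shape concrete, here is a stripped-down sketch of what a runner for a config like this might do. It is not the actual orchestrator: the `Stage` fields, `run_pipeline`, and the `agent` callable are hypothetical, and Jinja2 rendering and JSON Schema validation are replaced by plain callables so the example runs with the standard library only.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Stage:
    name: str
    render: Callable[[dict], str]    # stand-in for a Jinja2 template
    validate: Callable[[str], bool]  # stand-in for JSON Schema validation
    max_retries: int = 0


def run_pipeline(stages: list[Stage], context: dict, agent: Callable[[str], str]) -> dict:
    """Run stages in a fixed order; each validated output feeds later stages."""
    for stage in stages:
        prompt = stage.render(context)
        for attempt in range(stage.max_retries + 1):
            output = agent(prompt)
            if stage.validate(output):
                context[stage.name] = output
                break
        else:
            # No attempt passed validation: stop the whole pipeline.
            raise RuntimeError(f"stage {stage.name!r} failed after {attempt + 1} attempts")
    return context
```

Every new kind of task needs its own `render` and `validate` pair, which is exactly the upkeep that turned into the problem.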

It took a while, but it came together and worked very well. I’m proud of the work that went into this, and the learnings were invaluable.

And, you guessed it: Agents loved it.

— But…

(I know someone reading this right now is laughing…)

I hated it.

I was spending more time on the platform than on what it was supposed to be producing.

This was next-level babysitting. Every new task meant updating schemas, writing templates, and wiring up validations before an agent could do anything useful.

The deliverable wasn’t the platform… I had completely lost flexibility.

A different breed of model

Around this same time, Anthropic launched the Opus 4.5 model (IMO, a truly ‘capable’ model), along with a host of other Claude features.

Rewinding the clock, Opus 4.5 probably would have handled my earlier meeting workflow/ambiguity like a rockstar.

But experiences to this point had taught me…

it’s more than just throwing a premium model or structured workflow at the problem.

I knew I needed to dial things way back, but not abandon the learnings I gained so far. I had all the pieces now - it just needed to be thoughtfully executed.

It prompted a new question to solve:

“What do you actually NEED to control in an agentic platform?” …and what can you leave up to the agent to handle?

Takeaway

Model is important. Control (right amount) is critical.

Provide outcomes and measure success on deliverables

Do Not: prescribe the steps an agent should take to complete a task

Control Permissions, Define Rules, and Monitor activities

Do Not: rely solely on instructions for non-negotiables, e.g., permissions

Create a system that works for your needs vs. simply adopting an ‘ecosystem’

Do Not: over-engineer a system which becomes work or rigid technical debt
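A minimal sketch of putting a non-negotiable in code rather than in the prompt: the harness checks every write path and shell command before anything runs, so the agent can be told the rules but cannot talk its way past them. `path_allowed`, `command_allowed`, and the rule tuples are hypothetical, mirroring the `constraints` block in the earlier config.

```python
import shlex
from pathlib import PurePosixPath

# Hypothetical rules, mirroring the `constraints` block in the earlier config.
ALLOWED_PATHS = ("src/", "tests/")
BLOCKED_COMMANDS = ("rm", "git push --force")


def path_allowed(path: str, allowed: tuple = ALLOWED_PATHS) -> bool:
    """True only for relative paths that stay inside an allowed folder."""
    p = PurePosixPath(path)
    if p.is_absolute() or ".." in p.parts:
        return False
    return any(p.as_posix().startswith(prefix) for prefix in allowed)


def command_allowed(command: str, blocked: tuple = BLOCKED_COMMANDS) -> bool:
    """False if the command starts with any blocked token sequence."""
    tokens = shlex.split(command)
    for rule in blocked:
        rule_tokens = shlex.split(rule)
        if tokens[: len(rule_tokens)] == rule_tokens:
            return False
    return True
```

Prefix matching against a deny-list is deliberately naive (`git push origin main --force` slips through); an allow-list of permitted commands is the safer default.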

Tip

To build what I now imagined, I created an /advisor skill which served two purposes.

It captured:

- vision and outcomes - what I needed it to do, rules, and what success looked like

- learnings - all the do(s) and don’t(s) I tried already

The skill deliberately left out specifics about how I thought the system should be built.

Even though I had a lot of opinions by this point…

I think this is a trap people get into when using AI. LLMs tend to do better when you give vision and boundaries and let them find the path.

Case in point: The first deliverable was not the system, but five principles that every feature of the platform needs to operate from.

So that’s what I want to share, because I think these are far more important to a system than exactly what script runs where.

Five Principles for an Agentic Platform

These aren’t theoretical ideas. Nor can anyone say they are “best practices” (yet).

Each one exists because I got burned somewhere in a previous phase.

Work Is Driven From Tasks, Not Chat

If you're typing instructions into a chat window, you've made yourself the bottleneck.

Agents shouldn’t wait for humans to tell them what to do.

- if a roadmap doc is needed before work can begin, an agent creates the roadmap

- if a vision doc is needed before the roadmap, that’s a human’s job; agents should ask for it

Several simple workflows can run on a schedule to invoke agents to perform tasks.

It should happen continuously, automatically, all day.

The human’s job shifts from driving the work in chat to steering and approving it.

Humans and Agents still need a way to communicate, but it needs to be asynchronous.
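One way to picture task-driven work: a scheduled tick reads the task list and decides what agents can start now and what to ask a human for. This is a minimal sketch; `Task`, `plan_tick`, and the `owner` field are hypothetical names, and the human requests would be posted to an async channel (an issue, a note, a message) rather than a chat.

```python
from dataclasses import dataclass, field


@dataclass
class Task:
    name: str
    needs: list[str] = field(default_factory=list)  # names of prerequisite tasks
    owner: str = "agent"                             # "agent" or "human"
    done: bool = False


def plan_tick(tasks: dict[str, Task]) -> tuple[list[str], list[str]]:
    """One scheduled pass: tasks agents can start now, and requests for humans."""
    runnable, human_requests = [], []
    for task in tasks.values():
        if task.done:
            continue
        if not all(tasks[n].done for n in task.needs):
            continue  # still blocked on prerequisites
        if task.owner == "agent":
            runnable.append(task.name)
        else:
            human_requests.append(task.name)  # asked asynchronously, not in chat
    return runnable, human_requests
```

With a vision (human) → roadmap (agent) → build (agent) chain, the first tick only asks a human for the vision; once it is marked done, the next tick hands the roadmap to an agent without anyone typing a prompt.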

Separation of Platform and Project

One size doesn't fit all, but managing a unique setup for every project is a burden.

An intentional architectural decision made early was to create a separation between the AI Platform and the Projects that it works on.

This supports several key things.

Highlights:

- promotes reuse of platform assets

- avoids duplication of platform assets embedded in projects

- allows versioning of Platform independent of Projects (and vice versa)

- easy granular permission control by agent, by project

- keeps platform apps & tools used to run the show separate and replaceable
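A minimal sketch of the separation, assuming the platform owns shared assets (skills) and each project carries only a small manifest that pins a platform version and grants permissions per agent. `Platform`, `ProjectManifest`, and `skills_for` are hypothetical names, and exact version matching stands in for whatever pinning scheme is actually used.

```python
from dataclasses import dataclass, field


@dataclass(frozen=True)
class Platform:
    version: str
    skills: frozenset[str]  # shared, reusable assets live here, not in projects


@dataclass
class ProjectManifest:
    name: str
    platform_version: str  # pinned independently of the project's own code
    agent_permissions: dict[str, set[str]] = field(default_factory=dict)


def skills_for(agent: str, project: ProjectManifest, platform: Platform) -> set[str]:
    """Platform skills an agent may use here, filtered by the project's grants."""
    if project.platform_version != platform.version:
        raise RuntimeError(
            f"{project.name} pins platform {project.platform_version}, "
            f"but {platform.version} is running"
        )
    return set(platform.skills) & project.agent_permissions.get(agent, set())
```

Because the project only references platform assets by name, upgrading the platform or adding a project never means copying skills around.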

Isolation Is Non-Negotiable

Humans and Agents working in the same folders, on the same files, is a recipe for conflict.

Agents make mistakes.

- They write bad code, they overwrite files, they go down rabbit holes.

- Humans write poor, ambiguous requirements and change their minds!

If two agents shared a workspace, one bad move could corrupt the whole thing.

Every file-writing task gets its own dedicated, independent copy of the project.
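A minimal sketch of per-task isolation using a plain directory copy. `create_task_workspace` and `remove_task_workspace` are hypothetical helpers; for a git repository, `git worktree add` is a lighter-weight way to give each task its own checkout.

```python
import shutil
import tempfile
from pathlib import Path


def create_task_workspace(project: Path, task_id: str) -> Path:
    """Copy the project into a fresh temp folder owned by exactly one task."""
    base = Path(tempfile.mkdtemp(prefix=f"task-{task_id}-"))
    workspace = base / project.name
    shutil.copytree(project, workspace)  # full, independent copy
    return workspace


def remove_task_workspace(workspace: Path) -> None:
    """Delete the task's copy (and its temp parent) once its results are reviewed."""
    shutil.rmtree(workspace.parent)
```

A bad write in one task's copy never touches the human's files or another task's copy; only reviewed results make it back.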

Observe EVERYTHING That Happens

Asking agents to report what they did provides false security

Agents don’t always listen…(or they mean well, but forget to follow the rules)

A flavor of Principle 1 applies here too:

- Humans should not assume the responsibility of “keeping an eye on it”

- People will never do as good a job as instrumentation; we have better things to do!

tldr

A task-worker (human or agentic) should never instrument their own work.

It’s self-serving.

If logging depends on the agent remembering to do it, you’ll have big gaps.

…or worse, it always looks “perfect”.

This is especially important paired with Principle 5, which introduces time budgets.
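A minimal sketch of instrumentation that does not depend on the worker, assuming tools are plain Python functions the harness hands to the agent. The hypothetical `observed` decorator records every call, its outcome, and elapsed time whether or not the agent remembers to report it; `AUDIT_LOG` is an in-memory stand-in for an append-only store.

```python
import functools
import time
from typing import Any, Callable

AUDIT_LOG: list[dict] = []  # stand-in for an append-only log store


def observed(tool: Callable[..., Any]) -> Callable[..., Any]:
    """Wrap a tool so the harness, not the agent, records every call."""
    @functools.wraps(tool)
    def wrapper(*args, **kwargs):
        started = time.monotonic()
        entry = {"tool": tool.__name__, "args": repr(args), "kwargs": repr(kwargs)}
        try:
            result = tool(*args, **kwargs)
            entry["status"] = "ok"
            return result
        except Exception as exc:
            entry["status"] = f"error: {exc}"
            raise
        finally:
            # Runs on success and failure alike, so nothing goes unrecorded.
            entry["seconds"] = round(time.monotonic() - started, 3)
            AUDIT_LOG.append(entry)
    return wrapper
```

Failures are logged too, so the record can't quietly look "perfect", and the elapsed time feeds directly into time budgets.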

Give Lead Agents Budget & Mandate Delegation

An agent without a deadline will try to do too much.

Agents without a deadline can wander and burn resources trying to learn more than they need to about the request.

The key is to have the right context prepared for them, at just the right moment.

Lead agents are a strategic play to enforce human-imposed boundaries.

- Trying to do this orchestration yourself is a burden

- Lead agents can do it better in real time

- Subagents should be given constraints they are managed to (by the Lead)

tldr

I’ve never experienced an agent operating with bad intentions.

Unmanaged though, they can refactor code that didn’t need it or add “a new feature you might like”.

Lead agents can manage subagents for you.

This should go without saying: Don’t let agents manage their own budget.
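A minimal sketch of a harness-owned budget, assuming time in seconds as the unit (tokens or dollars would work the same way). `Budget`, `allocate`, and `BudgetExceeded` are hypothetical names: a lead can carve sub-budgets out of what it has left, but no agent is handed a way to raise its own limit.

```python
from dataclasses import dataclass


class BudgetExceeded(Exception):
    pass


@dataclass
class Budget:
    """Harness-owned time budget in seconds; agents spend it, never raise it."""
    limit: float
    spent: float = 0.0

    @property
    def remaining(self) -> float:
        return self.limit - self.spent

    def charge(self, seconds: float) -> None:
        if seconds > self.remaining:
            raise BudgetExceeded(f"needs {seconds}s, only {self.remaining}s left")
        self.spent += seconds

    def allocate(self, seconds: float) -> "Budget":
        """Lead carves a subagent's budget out of its own remaining time."""
        self.charge(seconds)
        return Budget(limit=seconds)
```

A subagent that hits its limit stops and reports back to the lead instead of wandering; the lead can't hand out more time than it was given.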

What’s Next

When I hear someone say “AI isn’t that good yet,” it’s often because the model hasn’t been given a fair chance to succeed.

AI isn’t ✨ magic ✨.

A solid framework is what makes magic

These principles can be applied regardless of your skill level.

The specific tools I use are all choices, not requirements.

The method and depth that you are able to implement will vary with the tools, but the concepts/benefits still hold.

Take what resonates, adapt it to your own needs, and go build something awesome!

Closing Thoughts:

The value gap is no longer model capability; it’s implementation (context, control, and continuous improvement).

AI can’t read your mind. But big opportunities are here.

Give a model the right information at the right time for the specific task… the results can be exactly what you want (consistently). Most of my platform is just solving for these things.

Next read is probably: v1 Implementation

Now, time for me to go move some of those issues to Ready!
